There are six ways AI threatens science: fake grants, fake grant reviews, fake articles, fake data, fake image forensics, and fake peer reviews. That's the impression you'd get from reading the science press. But as with any technological advance, there are both benefits and costs.

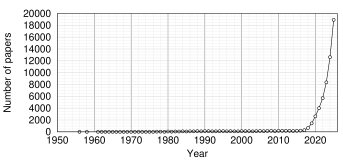

As of today, there are 48,242 scientific articles with AI or artificial intelligence in the title, including one on AI applications for knee surgery, one on AI evaluating residency application personal statements, and one on AI in gastrointestinal endoscopy. While it might someday be essential, AI has become the latest fad in science.

Classic fad curve. Papers per year listed in PubMed with AI or Artificial Intelligence in the title (2025 data point is estimated).

Detecting fake data by AI

Two of the newest applications of AI are in evaluating grants and papers and in detecting data manipulation. One article [1] compared abstracts written by ChatGPT and Bard for quality, using—yep, you guessed it—AI to determine which was better. They concluded that ChatGPT was better than Bard at meeting journal guidelines:

The AI-detection program predicted that 21.7% (13/60) of the human group, 63.3% (38/60) of the ChatGPT group, and 87% (47/54) of the Bard group were possibly generated by AI.
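The arithmetic behind those figures is easy to check. The short Python sketch below uses only the counts quoted above; the variable names, and the reading of the human-group figure as a false-alarm rate, are assumptions of this sketch rather than anything taken from the paper.

```python
# Counts quoted above: (abstracts flagged as possibly AI-generated, abstracts tested).
flagged = {
    "human": (13, 60),
    "ChatGPT": (38, 60),
    "Bard": (47, 54),
}

def percent_flagged(hits: int, total: int) -> float:
    """Share of abstracts the detector flagged, as a percentage."""
    return 100.0 * hits / total

rates = {group: percent_flagged(*counts) for group, counts in flagged.items()}

for group, rate in rates.items():
    print(f"{group}: {rate:.1f}% flagged")

# Assumption: every abstract in the human group really was written by a person,
# so its rate is the detector's false-alarm rate.
false_alarm_rate = rates["human"]
# By the same reasoning, the share of ChatGPT abstracts the detector let through.
chatgpt_missed = 100.0 - rates["ChatGPT"]
```

On those numbers, the detector flagged about one human-written abstract in five and let more than a third of the ChatGPT abstracts through.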

Another use of AI doing a circular analysis of its own output is in detecting fake results generated by AI. Gosselin [2] found that different AI fraud detectors for AI-generated Western blots disagreed with each other and were unreliable. However, Mandelli et al. [3] obtained a different result, claiming accuracy as high as 84–92% in detecting them. Hartung et al. [4], meanwhile, found that AI-generated histological images are now so good that human experts can't reliably tell the difference. The problem, as always, is that it's tough to know what the AI is actually looking at. Is the algorithm really detecting artificiality, or is it noticing some irrelevant difference, such as a difference in contrast or noise?

It's practically a tautology: if the AI could detect a difference, it's proof that the AI-generated training images were not good enough to pass as real. To get a meaningful result, the authors would have had to use real hand-manipulated images from the literature. Of course, real fake images are much harder to find; fake fake images are easy.
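The worry about irrelevant differences can be illustrated with a toy sketch in Python. Everything in it is a made-up assumption: the "images" are simulated lists of pixel values, the two sets differ only in noise level, and the sizes, noise values, and threshold are arbitrary. It does not reproduce any of the cited detectors. Even so, a "detector" that looks only at pixel noise separates the two sets almost perfectly.

```python
import random
import statistics

random.seed(0)  # fixed seed so the toy result is repeatable

PIXELS = 256     # hypothetical image size (flattened)
N_IMAGES = 200   # hypothetical number of images per class

def make_image(noise_sd: float) -> list[float]:
    """A stand-in 'image': pixels scattered around mid-gray with a given noise level."""
    return [random.gauss(0.5, noise_sd) for _ in range(PIXELS)]

# Assumption for illustration: the two sets differ ONLY in noise level,
# not in anything that has to do with being fabricated.
real_images = [make_image(0.10) for _ in range(N_IMAGES)]
synthetic_images = [make_image(0.05) for _ in range(N_IMAGES)]

def looks_synthetic(image: list[float], threshold: float = 0.075) -> bool:
    """A 'detector' that never looks at content, only at pixel noise."""
    return statistics.stdev(image) < threshold

# Count correct calls on both sets.
correct = sum(not looks_synthetic(img) for img in real_images)
correct += sum(looks_synthetic(img) for img in synthetic_images)
accuracy = correct / (2 * N_IMAGES)

print(f"accuracy from noise alone: {accuracy:.1%}")
```

High accuracy on a benchmark like this says nothing about whether the detector would catch hand-manipulated images, which need not carry any such telltale difference.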

Using AI to write fake papers

“Fake” and “AI” are now virtually synonymous. Májovský et al. [5] found that ChatGPT could generate “highly convincing” scientific articles on neurosurgery, the only problem being that AI generates lots of fake citations (even more, presumably, than the typical author). One commenter noted that peer review fraud has evolved out of the need for reviews, as reviewers are now (it is claimed) drowning in the workload from fake papers. Since an unknown percentage of those papers are generated by AI, it's again a question of AI detecting its own dirty work. It's a positive feedback cycle that could turn AI into an electronic navel-gazing system, giving us a different kind of ‘singularity’ than we expected.

Using AI to detect ‘fake’ journals

The authors of a new article [6] claim that their AI has detected over a thousand new ‘questionable’ journals. However, they admit that it has a false-positive rate of 24%. The number of questionable journals drops dramatically when the criteria are tightened. The authors speculate about the origin of these journals:
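To get a feel for what a 24% error figure means in practice, here is a back-of-the-envelope sketch in Python. It assumes the 24% is the share of flagged journals that are wrongly flagged, which is only one possible reading; if it is instead the share of legitimate journals that get flagged, the count depends on how many journals were screened. The figure of 1000 is a placeholder for "over a thousand", and the function name is hypothetical.

```python
def expected_false_flags(n_flagged: int, false_share: float) -> tuple[int, int]:
    """Split flagged journals into expected wrong and right calls.

    Assumes false_share is the fraction of *flagged* journals that are
    actually legitimate (one possible reading of the 24% figure).
    """
    wrong = round(n_flagged * false_share)
    return wrong, n_flagged - wrong

# 1000 is a placeholder for "over a thousand"; the exact count is not given here.
wrong, right = expected_false_flags(1000, 0.24)
print(f"{wrong} journals wrongly flagged, {right} correctly flagged")
```

On that reading, roughly one flagged journal in four would be a legitimate venue carrying a ‘questionable’ label.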

The lack of oversight by professionals, such as librarians vetting the subscriptions to journals, in addition to the pressures to publish and the direct financial contributions of authors in the form of author processing charges (APCs), created the perfect storm for questionable venues to appear.

This concern is echoed by Nature magazine [paywalled], which has long been worried about loss of revenue from libraries and individuals canceling their subscriptions. ‘Questionable’ journals threaten subscription-based journals and science magazines. Hence the crusade against predatory journals is not completely free of self-interest.

The paper is not convincing, however, because the criteria were arbitrary and somewhat contradictory. Here are the biggest factors for a good journal:

Number of institutions citing the journal

Journal self-citation count

h-index of first, middle, and last authors

The strongest criteria for a bad journal were:

Number of author affiliations per publication

Year of first publication

Author self-citation count per publication

Number of authors per publication

Average h-index of the authors

In other words, self-citation count was bad if the author did it, but good when the journal did it. The h-index of the first, middle, and last authors signified a good journal, but their average signified a bad one. This sounds like the sort of contrived differences that an LLM might come up with.

How to fix the ‘fake’ journal problem

Submit your papers only to journals whose articles you cite.

Editors of print journals are obviously worried, but it's hard to see the downside for a researcher. Fake journals drive down prices and siphon off bad research. Open access journals encourage science literacy among the public and healthy skepticism about bad science. If the fake journal does its own fake peer review of its fake articles using AI, that means less work for the researchers. Researchers will submit their papers mainly to journals whose articles they cite and ignore the rest. That's what scientists have always done, and it works well.

Using AI to decide funding

Science mag is a little more critical about the risks and benefits of AI, saying that funding agencies are starting to use AI to decide which grants to fund. They ask: Is this really a good idea?

They quote economist Ramana Nanda as saying that when venture capitalists tried to use AI to decide which startups to fund, the AI selected startups that had the same ideas as earlier ones, thereby guaranteeing the new ones would fail. The implication is that this will also happen in science.

Where ‘AI’ could be useful is helping humans understand the previous work by the applicants. It's important to know, for instance, what experience the applicants had and whether they have already published the results for which they're requesting funding—or whether they have published results that contradict them, which I've seen happen more than once. Reviewers often don't check for this.

AI-generated summaries have started turning up on discussion boards when someone wants to sound knowledgeable. They're easily recognized. Readers just skip over them, knowing they're probably full of errors. This is again useful: the public is recognizing that a professional tone, prolixity, and lots of em-dashes without spaces are no guarantee of accuracy.

Where AI is not useful is when it's used to circumvent anti-discrimination laws, where the AI is instructed to prioritize badly designed projects to ensure equity funding of “under-represented groups.” The temptation to use AI for political gain would be irresistible to those who think certain groups have a disadvantage; however, there is little evidence to back this up, and no evidence that discrimination helps them. What it will do is discredit science by making it appear political.

Using AI to do peer review and review grants

Another area where it's actively harmful is in grant peer review. Grant reviewers will almost certainly take the ten or so grants the agency dumps on them and run them through an AI instead of reading them carefully. Their comments will be based on whatever the AI tells them.

This is harmful because a grant proposal is a highly stylized and constraint-driven piece of writing. First, the applicants must conceal any new ideas and implications of their findings so the reviewer can't steal them. Second, if they're describing a new drug they know will have harmful side effects, such as causing cancer, they must conceal that as much as possible, either by misdirection (e.g., saying it “could” be useful as a treatment for cancer) or by misquoting the literature. Third, they must spin the project to fit the current fads and the prevailing narrative. These three tricks are common in grant proposals, and an AI is unlikely to detect them.

For example, when generating cell-specific knockouts was all the rage in biology, most grants we reviewed said they planned to use this technique. Those that selected techniques that were too novel or too unfashionable invariably got triaged, which means they were dumped into the pile of the 50% of grants that don't get considered. The applicants, of course, knew this, and only a few tried to buck the herd.

Since the current fad is AI, it's a safe bet that grants proposing AI will be described as “cutting edge” and highly scored; those proposing older but established algorithms, like PCA (principal components analysis), will get trashed.

Indeed, one colleague told us that PCA is unreliable and suggested that we remove it from our grant. The solution is simple: do a global search and replace it with “convolutional neural network classifier.” Never mind that the AI is not just a neural network, but could well be using PCA as a back end because it's more reproducible.

Using AI in this context would force the applicant to use AI in writing a grant: “Write me a grant that ticks all the boxes that a reviewer might look for, while including everything in the program announcement. Oh, and pick a nice project for me.”

There was an episode of the sci-fi cartoon Futurama where a scientist did just that. One day he got a knock on the door and somebody walked in and handed the Nobel prize to his computer. The ultimate effect of AI could be: life imitates cartoon.

[1] Kim HJ, Yang JH, Chang DG, Lenke LG, Pizones J, Castelein R, Watanabe K, Trobisch PD, Mundis GM Jr, Suh SW, Suk SI. Assessing the Reproducibility of the Structured Abstracts Generated by ChatGPT and Bard Compared to Human-Written Abstracts in the Field of Spine Surgery: Comparative Analysis. J Med Internet Res. 2024 Jun 26;26:e52001. doi: 10.2196/52001. PMID: 38924787; PMCID: PMC11237793.

[2] Gosselin RD. AI detectors are poor western blot classifiers: a study of accuracy and predictive values. PeerJ. 2025 Feb 20;13:e18988. doi: 10.7717/peerj.18988. PMID: 39989748; PMCID: PMC11847483.

[3] Mandelli S, Cozzolino D, Cannas ED, Cardenuto JP, Moreira D, Bestagini P, Scheirer WJ, Rocha A, Verdoliva L, Tubaro S, Delp EJ. Forensic Analysis of Synthetically Generated Western Blot Images. IEEE Access. 2022;10:59919–59932. doi: 10.1109/ACCESS.2022.3179116.

[4] Hartung J, Reuter S, Kulow VA, Fähling M, Spreckelsen C, Mrowka R. Experts fail to reliably detect AI-generated histological data. Sci Rep. 2024 Nov 19;14(1):28677. doi: 10.1038/s41598-024-73913-8. PMID: 39562595; PMCID: PMC11577117.

[5] Májovský M, Cerný M, Kasal M, Komarc M, Netuka D. Artificial Intelligence Can Generate Fraudulent but Authentic-Looking Scientific Medical Articles: Pandora's Box Has Been Opened. J Med Internet Res. 2023 May 31;25:e46924. doi: 10.2196/46924. PMID: 37256685; PMCID: PMC10267787.

[6] Zhuang H, Liang L, Acuna DE. Estimating the predictability of questionable open-access journals. Sci Adv. 2025 Aug 29;11(35):eadt2792. doi: 10.1126/sciadv.adt2792. Erratum in: Sci Adv. 2025 Aug 29;11(35):eaeb8642. doi: 10.1126/sciadv.aeb8642. PMID: 40864719; PMCID: PMC12383260.

sep 01 2025, 4:34 am. minor updates sep 02 and sep 03 2025

Hardly any AIs were harmed in writing this article.