A new paper came out this month claiming that even “visual professionals” are now having difficulty telling the difference between authentic images and fake, AI-generated ones. Only 54.35% of the AI images of human portraits were correctly identified as such by a population of 161 volunteers, while 79.81% of the authentic images were correctly identified as real. [1] Pros were only marginally better than normal people at spotting a fake.
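
To put those two percentages on a common scale, here is a rough sketch in Python. It treats the pooled rates as if they came from a single observer and assumes equal numbers of AI and authentic images, neither of which is stated above. The function names are illustrative, and the sensitivity and bias measures are standard signal-detection formulas, not something taken from the paper.

```python
from statistics import NormalDist

# Rates quoted in the text above.
hit_rate = 0.5435                 # AI images correctly called AI
correct_rejection_rate = 0.7981   # authentic images correctly called real
false_alarm_rate = 1 - correct_rejection_rate  # authentic images called AI


def balanced_accuracy(hit, correct_rejection):
    """Mean of the two per-class accuracies (assumes a 50/50 image mix)."""
    return (hit + correct_rejection) / 2


def d_prime(hit, false_alarm):
    """Sensitivity: distance between z-scored hit and false-alarm rates."""
    z = NormalDist().inv_cdf
    return z(hit) - z(false_alarm)


def criterion(hit, false_alarm):
    """Response bias: positive means reluctance to call an image AI."""
    z = NormalDist().inv_cdf
    return -(z(hit) + z(false_alarm)) / 2


if __name__ == "__main__":
    print(f"balanced accuracy: {balanced_accuracy(hit_rate, correct_rejection_rate):.4f}")
    print(f"d-prime:           {d_prime(hit_rate, false_alarm_rate):.3f}")
    print(f"criterion:         {criterion(hit_rate, false_alarm_rate):.3f}")
```

Under those assumptions the balanced accuracy comes to about 67%, compared with 50% for guessing, and the positive criterion suggests the volunteers leaned toward calling images real.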

The images the authors showed suggest that the situation may not be as dire as they thought. Of the human portraits they showed from DALL-E 3, Firefly, and Midjourney, only the Firefly image was hard to identify unambiguously as fake.

Because Firefly produced the most convincing fakes, I tested it myself and compared the results with authentic images. The first two images below are authentic pictures of a young fox eating a cicada, taken with a D7000 camera in my back yard. In the first image, a small part of the cicada's wing can be seen in the fox's mouth. The second one is overexposed but the cicada is easier to see.

In my first attempt at an AI picture, prompting with “a fox with a cicada in its mouth” (Fig. 3), the fox was older but still plausible, but the cicada did not appear realistic, possibly because I mistyped ‘cicada’ as ‘cidada.’

1. Authentic photo of a fox eating a cicada

2. Authentic photo of a fox eating a cicada

In my next attempt (Fig. 4), I changed the prompt to “a fox eating a cicada,” taking care to spell it correctly. Several points are worth noting. The fur color and eye reflections were improved, and the fox appeared happier. However, the fox's stance was strange, and the computer seemed to have trouble with the concept of ‘eating’ and got the relative scale of the two animals wrong. Both images were easy to identify as fake because the morphology, scale, and relative position of the animals were wrong.

3. AI-generated photo. The prompt “a fox with a cidada in its mouth” induced the computer to hallucinate a new species. So now you know what a cidada looks like (Firefly)

4. AI-generated photo using the prompt “a fox eating a cicada” (Firefly)

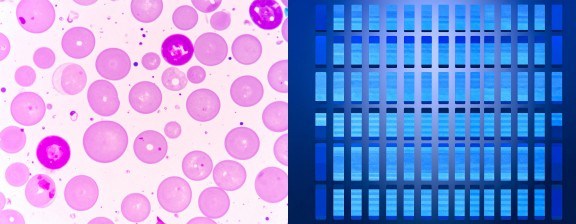

5. AI-generated images from the prompt “western blot” (left) and “western blot stained with chemiluminescent reagent” (right) look nothing like real Western blots (see here for a real example)

Sean Guo et al. [2] gave three tips on spotting fake images: abnormal details such as strangely positioned limbs, often in the background; incoherent or misspelled text in an image; and sharp foreground but blurry background, which the computer uses to shorten rendering time by omitting unnecessary details. I'd add a few more: excessively smooth and homogeneous features, excessively sharp rendering on the eye area, and an absence of undersaturated or oversaturated regions. In a real image, features in dark regions are usually fuzzier or more grainy, while a fake image will have perfect detail throughout the image.

It's not just bugs depicted as mutant alien molluscs. A fake image can also be identified by its context. If a human face looks like something from a toothpaste commercial, that's a clue. An AI has no concept of a soul, so a person's expression may appear bland or unusually animated, or the body may be contorted. Another clue is if the image just magically happens to align with whatever narrative somebody happens to be pushing: in some places, photos of Putin or Trump will be made as unflattering as possible. Women may appear with impossibly smooth airbrushed complexions and impossibly unmanageable hair.

There's a fine line between AI, photoshopping, and deliberately mislabeled images. Just recently a photo of a ‘starving’ child widely circulated in the tabloid press was revealed actually to be a photo of a baby with a serious genetic disease.* In each case, it's the intent that makes it dishonest.

There's a lot of concern that AI could be used to create fake scientific data. My tests showed that science seems to be safe for now. Fig. 5 shows the result from the prompt “western blot.” I have no idea what those pink things are supposed to be. You'd get laughed out of science if you tried to use these images in a paper.

Image forensic analysis of the fake animal images shown here didn't show any obvious differences other than a smoother background with perfect bokeh and absolutely zero chromatic aberration (where white objects in the corners appear to have red fringes on one side and blue fringes on the other). The histograms looked normal for both images (i.e., a reasonably continuous distribution of red, green and blue values), and there were few regions of excessive noise or smoothness besides the background. These would have shown up clearly in smoothness analysis, zebra colormap, and contour mapping. Densitometric traces didn't show any obvious difference.
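
For readers who want to try the histogram and smoothness checks themselves, here is a minimal sketch in plain Python. It is not the software used for the analysis above. It assumes the image has already been decoded into lists of 8-bit values (for example with an imaging library, not shown), and the function names, the 8-pixel block size, and the variance threshold are all arbitrary choices for illustration.

```python
from statistics import pvariance


def channel_histogram(values, bins=256):
    """Count how many pixels fall on each 8-bit intensity level."""
    counts = [0] * bins
    for v in values:
        counts[v] += 1
    return counts


def histogram_gaps(counts):
    """Number of empty levels between the darkest and brightest used levels.

    A reasonably continuous histogram has few gaps.
    """
    occupied = [i for i, c in enumerate(counts) if c]
    if not occupied:
        return 0
    lo, hi = occupied[0], occupied[-1]
    return sum(1 for c in counts[lo:hi + 1] if c == 0)


def block_variance(gray, block=8):
    """Variance of each block x block tile of a grayscale image (list of rows)."""
    rows, cols = len(gray), len(gray[0])
    result = []
    for r in range(0, rows - block + 1, block):
        row_out = []
        for c in range(0, cols - block + 1, block):
            tile = [gray[y][x]
                    for y in range(r, r + block)
                    for x in range(c, c + block)]
            row_out.append(pvariance(tile))
        result.append(row_out)
    return result


def smooth_fraction(variances, threshold=1.0):
    """Fraction of tiles whose variance is below the (arbitrary) threshold."""
    flat = [v for row in variances for v in row]
    return sum(1 for v in flat if v < threshold) / len(flat)


if __name__ == "__main__":
    # Synthetic 16x16 test image: left half perfectly flat, right half textured.
    image = [[128 if x < 8 else (x * 37 + y * 91) % 256 for x in range(16)]
             for y in range(16)]
    tiles = block_variance(image)
    print("smooth tiles:", smooth_fraction(tiles))
    flat_pixels = [v for row in image for v in row]
    print("histogram gaps:", histogram_gaps(channel_histogram(flat_pixels)))
```

On a real photo, tiles in dark or out-of-focus regions would normally still show some sensor noise, so a large fraction of near-zero-variance tiles is the kind of excessive smoothness described above. The threshold would need tuning for any particular camera or image size.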

Even so, any published image generated by AI should be labeled as “AI generated” or as a “cartoon.” This is especially important in science, where images of amusingly well-endowed rats have recently turned up. That may seem harmless, but fake images can rapidly erode the credibility of science. This can already be seen with ‘artists' renderings’ of dark matter, space phenomena, and exoplanets published by NASA, many of which aren't properly labeled as computer-generated art. Even false-color images need to be prominently labeled, as people are accustomed to thinking that a photo resembles the actual object; breaking that connection encourages cynics to claim that the Moon landing was a hoax and that most scientific findings are fraudulent.

Another example came several years ago, when images appeared after Syria was accused of killing people with chlorine gas. Evidently the publisher thought the cloud was chlorine, not dust, and so must be green, and made it so, not realizing that that quantity of chlorine would have killed the photographer. The obvious fakeness makes people ask: if it's true, why is it necessary to fake it?

In all likelihood, AI won't be a big change for the press. A great many people already don't believe a thing the news media say. You can't get lower than zero credibility. But it would be a shame for science to go down the same path.

Even NPR tells us to be cautious of images that confirm our existing biases. Oh, and one more thing. The first person who suggests sending an image to ChatGPT to ask whether it's real or not gets a pixel right between the eyes. And yes, that would hurt . . . a little.

Update, Aug 05 2025: This is not to imply that the NYT is or is not a tabloid. The photo originally appeared in the UK tabloid Daily Express and was picked up by other sources, including the New York Times. The suspect photo was later replaced in another tabloid by similar photos, apparently of the same child.

[1] Velásquez-Salamanca D, Martín-Pascual MÁ, Andreu-Sánchez C. Interpretation of AI-Generated vs. Human-Made Images. J Imaging. 2025 Jul 7;11(7):227. doi: 10.3390/jimaging11070227. PMID: 40710614; PMCID: PMC12295870.

[2] Guo S, Swire-Thompson B, Hu X. Specific media literacy tips improve AI-generated visual misinformation discernment. Cogn Res Princ Implic. 2025 Jul 3;10(1):38. doi: 10.1186/s41235-025-00648-z. PMID: 40608186; PMCID: PMC12229391.

jul 30 2025, 5:07 am. updated aug 05 2025