book reviews

Sociology books reviewed by T. Nelson

Reviewed by T. Nelson

I pride myself on being a really boring person. I consider Philip Glass's music not repetitive enough. I got rid of my gray Volvo four-door because it was too flashy. Even my dentist agrees with me, calling me a dull, dull, dull ... open wider ... dull, boring, dull person. I'm so boring that when somebody says I'm boring it's the high point of the day.

So maybe that's why I liked Counterfactuals and Causal Inference so much. The whole book is full of sentences like this:

Moreover, the instrumented variable (IV) literature associated with the counterfactual approach has shown that, in the absence of such heterogeneity, IVs estimate marginal causal effects that cannot then be extrapolated to other segments of the population of interest without introducing unsupportable assumptions of homogeneity of effects. [p.449]

In fact this is a marked improvement for social science (which the authors say is a distinct branch of sociology): while perhaps not Shakespeare, the writing in this book is impressively clean and professional, with no trace of the obsession that some of their colleagues have with polysyllabic lucubrations or with twisting the language to sneak their opinions into the text. A non-ideological approach is bound to help their case: in science there's no room for opinions of any kind. Social theorists who ignored this principle invented vast social schemes that failed spectacularly in the real world, sometimes at enormous human cost.

What is their case? The authors say that descriptions and correlations, valuable as they are, need to be complemented by deeper mechanistic explanations. This idea is new in sociology, going back only to a seminal 2000 book (or paper, they're pretty much the same in this field) by Judea Pearl, who advocated using—wait for it—counterfactuals and causal inference to distinguish cause and effect.

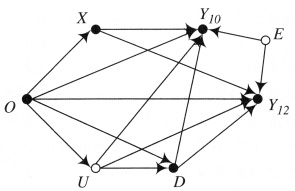

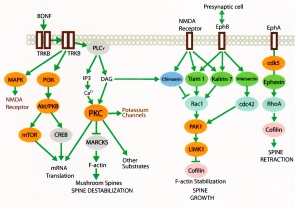

That's why, as a biochemist, I must admit my eyes glazed over a few times reading about these causal graphs. We've been using them for about a century now (see diagram below). You can't publish in a good journal these days by just watching your cells and describing what happens. You have to intervene: knock out an old gene or induce a new one, then describe what happens. I was hoping for some magic statistical test I could use in my work to prove causation, but there's nothing like that here.

In fairness, interventionist experiments are much harder and more expensive in the social sciences than they are for us; instead of zapping a gene, sociologists might have to zap a whole government program. But the concept is the same: to establish causation, you need a hypothesis, a control group, and an intervention that changes something. All other factors must be randomized so they cancel out. With these three things in hand, you can put a causal mechanism on solid ground, one arrow at a time.
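Why randomization does the heavy lifting can be sketched as a small simulation. Everything in it is hypothetical: an invented effect size of 2.0, a hidden "baseline" that stands in for all the uncontrolled factors, and Gaussian noise. When a coin flip decides who is treated, a plain difference in means recovers the effect. When the hidden factor decides, the same calculation is biased.

```python
import random
import statistics

TRUE_EFFECT = 2.0  # hypothetical effect of the intervention, chosen for illustration


def simulate(n, assign, seed=0):
    """Return (treated outcomes, control outcomes) for n simulated subjects.

    Each subject has a hidden baseline standing in for every uncontrolled
    factor; `assign(baseline, rng)` decides whether that subject is treated.
    """
    rng = random.Random(seed)
    treated, control = [], []
    for _ in range(n):
        baseline = rng.gauss(0.0, 1.0)  # hidden factor the experimenter can't see
        noise = rng.gauss(0.0, 1.0)     # measurement noise
        is_treated = assign(baseline, rng)
        outcome = baseline + noise + (TRUE_EFFECT if is_treated else 0.0)
        (treated if is_treated else control).append(outcome)
    return treated, control


def difference_in_means(treated, control):
    return statistics.fmean(treated) - statistics.fmean(control)


# Randomized: a coin flip decides, so the hidden baseline is balanced across groups.
randomized = difference_in_means(*simulate(20_000, lambda b, rng: rng.random() < 0.5))

# Self-selected: subjects with a high baseline end up treated, so the
# hidden factor is confounded with the treatment.
self_selected = difference_in_means(*simulate(20_000, lambda b, rng: b > 0))

print(f"randomized estimate:    {randomized:.2f}")
print(f"self-selected estimate: {self_selected:.2f}")
```

The randomized estimate lands close to 2.0; the self-selected one comes out well above it, because the treated group was better off to begin with. No amount of extra data fixes the second number.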

I recommend reading chapters 1–3, then 11–13, which are more philosophical, before diving into the main part of the book (Ch. 4–10).

A central principle here is the “stable unit treatment value assumption” or SUTVA, an acronym that even sociologists make fun of, and which is what everyone else calls “independence.” It means that the way one subject responds is independent of how the others respond, and also independent of whatever the mechanism is.

Morgan and Winship look to clinical drug trial design as a model for how to design good experiments. The best one is the crossover design, where the subjects get one treatment for a while, then switch places with the control group. This cancels out the confounding factors*, but it's tough to do that in sociology. Besides confounding factors, where two different causes produce the same effect, there can be back-door causes, where a cause produces the effect by a secondary, more circuitous route. Much statistical analysis is needed to sort these out; unless you can truly randomize the population, you'll never be sure the mechanism is real.
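How a crossover design cancels out differences between subjects can also be shown with a toy simulation. The numbers (a drug effect of 1.0, a drift of 0.5 between periods, a subject-to-subject spread of 5.0) are invented, and the sketch assumes no carryover from the first period into the second, which real trials try to guarantee with a washout interval.

```python
import random
import statistics

TRUE_EFFECT = 1.0    # hypothetical drug effect
PERIOD_EFFECT = 0.5  # hypothetical drift between period 1 and period 2


def crossover_differences(n_subjects, seed=0):
    """Simulate a two-period AB/BA crossover trial.

    Returns one (drug - placebo) difference per subject. Assumes no
    carryover: the drug's effect has washed out before period 2.
    """
    rng = random.Random(seed)
    # Randomize the sequence: half the subjects get the drug first (AB),
    # the other half get placebo first (BA).
    drug_first = [True] * (n_subjects // 2) + [False] * (n_subjects - n_subjects // 2)
    rng.shuffle(drug_first)

    differences = []
    for ab in drug_first:
        subject = rng.gauss(0.0, 5.0)  # large, stable subject-level factor
        outcome = {}
        for period in (1, 2):
            on_drug = (period == 1) == ab
            y = (subject
                 + (PERIOD_EFFECT if period == 2 else 0.0)
                 + (TRUE_EFFECT if on_drug else 0.0)
                 + rng.gauss(0.0, 1.0))
            outcome["drug" if on_drug else "placebo"] = y
        # The subject term enters both outcomes, so it cancels in the difference.
        differences.append(outcome["drug"] - outcome["placebo"])
    return differences


diffs = crossover_differences(2_000)
estimate = statistics.fmean(diffs)
print(f"estimated effect: {estimate:.2f}")
print(f"spread of within-subject differences: {statistics.stdev(diffs):.2f}")
```

Each subject serves as his or her own control, so the large subject-level spread drops out of every difference, and the period drift averages away because the AB and BA groups are the same size.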

So I have to say this book was not quite boring enough for me. Maybe I'll read Causal Inference for Statistics, Social and Biomedical Sciences by Imbens and Rubin next. That one has lots more equations in it. Woo-hoo!

Many sociologists take issue with these new principles, saying they're too restrictive. But the goal of making sociology more scientific is highly laudable. And there's a bonus: if the new paradigm sticks, all those Marxists in the sociology department will have to go out and find a job.

* It's also the most beautiful design, because all the subjects get a chance to be treated with the drug, and we get to monitor half of them for longer-term adverse effects. But it also costs more.

mar 26, 2017

Reviewed by T. J. Nelson

“Information is sticky!” exclaims César Hidalgo in this very light book. He talks about entropy and Shannon's information theory in a nontechnical way, making it understandable for any high school student with no background in science or economics. He then considers complexity as interchangeable with information (technically it's not: complexity has a specific mathematical meaning).
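For readers who want the one formula underneath the entropy discussion, Shannon entropy measures the average information per symbol in bits, $H = \sum_i p_i \log_2(1/p_i)$. The sketch below is an illustration, not something from the book: it treats each character as a symbol and estimates the probabilities from counts within the message itself.

```python
import math
from collections import Counter


def shannon_entropy(message):
    """Average information per symbol, in bits: sum of p * log2(1/p)."""
    counts = Counter(message)
    total = len(message)
    return sum((c / total) * math.log2(total / c) for c in counts.values())


print(shannon_entropy("aaaaaaaa"))  # 0.0: one repeated symbol, no surprise
print(shannon_entropy("abababab"))  # 1.0: two equally likely symbols
print(shannon_entropy("abcdefgh"))  # 3.0: eight equally likely symbols
```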

On page 52 he finally gets around to his topic: complexity and information in economics. He tries to create memorable soundbites, like “products are crystals of imagination” and “personbyte”, meaning the maximum amount of knowledge and knowhow a single person can carry. Different countries, he says, have different abilities to ‘crystallize’ information.

Next he talks about networking, as in market interactions. The cost of market interactions is reduced by technology and by improvements in standards. He says (p.103) that by raising interaction costs, “[e]xtreme bureaucracy generates large networks containing many people but few personbytes.” (This could be true, but considering that neither networks, bureaucracy, complexity, nor personbytes in a society can be measured, proving it might be a challenge.)

Knowledge and knowhow are organized in hierarchical patterns, he says, which means that “industry-location networks are nested, and countries move to products that are close by in the product space.” To back this up he shows a number of graphs purporting to demonstrate a correlation between GDP per capita and economic complexity. He says he used the “full mathematical formula” to calculate this, but doesn't say what that is. It probably doesn't matter, because it seems more likely to me that a high GDP would cause economic complexity than the other way around. The theory so far seems to be struggling to add something to what we all already know.

Mostly handwaving here. It's a typical pop economics book, much like Freakonomics but lighter and not as controversial. He's trying to come up with new concepts, and that's always good. But I have a feeling these new terms are too cute to get much traction.

jun 19, 2017