Introduction

Throughout my career, I have done lots of mathematical modeling, including neural networks and my current project, molecular modeling. It's interesting work, but there are risks. Over-enthusiastic modelers destroyed the credibility of the entire neural network field not once, but twice.

The first time was back in the 1960s. MIT computer scientist Marvin Minsky famously brought down the house of cards by proving that a model called the perceptron could not handle something called the parity problem. The parity problem asks whether a pattern contains an even or odd number of items. For example, a computer may be presented with a picture of many bananas. The task is to determine whether the number of bananas is even or odd. Minsky proved that the models were unable to perform this task.
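The smallest case of parity, two inputs (better known as XOR), makes the limitation easy to see. Here is a minimal Python sketch, not Minsky's proof: it searches a small grid of integer weights for a single threshold unit, of the kind those early models were built from, that reproduces a given function on all four inputs. The function names and the grid range are my own assumptions, chosen only for illustration.

```python
from itertools import product

# All four possible two-bit input patterns.
INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]


def threshold_unit(w1, w2, b, x):
    """A single linear threshold unit: fires when the weighted sum exceeds zero."""
    return 1 if w1 * x[0] + w2 * x[1] + b > 0 else 0


def representable(target, grid=range(-5, 6)):
    """True if some (w1, w2, b) on the grid matches target on every input."""
    for w1, w2, b in product(grid, repeat=3):
        if all(threshold_unit(w1, w2, b, x) == target(x) for x in INPUTS):
            return True
    return False


def parity(x):
    """1 if an odd number of inputs are on, else 0 (XOR for two inputs)."""
    return (x[0] + x[1]) % 2


def logical_and(x):
    return x[0] & x[1]


def logical_or(x):
    return x[0] | x[1]


for name, fn in [("AND", logical_and), ("OR", logical_or), ("parity", parity)]:
    print(name, representable(fn))
```

The search finds weights for AND and OR but none for parity. A finite grid proves nothing by itself, but the failure here is not an accident of the grid. Parity would need b <= 0, w1 + b > 0, w2 + b > 0, and w1 + w2 + b <= 0 all at once, and those four conditions contradict one another.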

The field eventually rebounded, as researchers realized that the inability to solve this problem was a feature, not a bug, because the human brain cannot handle the parity problem, either. We humans have to laboriously count the bananas and determine the answer symbolically. Gradually the field regained respectability. But it crashed again after people once again became too enthusiastic about their models. We had to abandon the field and do something else.

Today, neural networks are a backwater. Some research still goes on, and a few algorithms have made it into the computer science courses, but they are no longer cutting-edge.

What modeling does and does not show

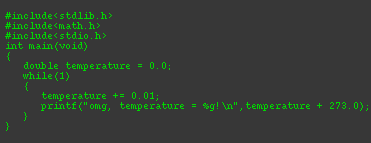

Some commentators have claimed that modeling is not scientific. This is not true. Modeling is a way of testing your hypothesis. It tells you that the computed effect could happen if your assumptions are correct, and if the mechanisms you put in the code are correct. There are many times when this is very valuable. It is like a sanity check for a theory. If you are unable to get the computer to produce the predicted effect, it means there is something wrong with your theory or with your logic. A computer model is never more than a theory until the data confirm it. To base policy decisions on a model would be sheer lunacy.

A good way to understand modeling is to compare it to the American TV show Mythbusters. Of course, the Mythbusters are entertainers, not scientists, and they sometimes do things wrong. Occasionally, they do everything wrong. When they confirm the predicted effect, it proves that the effect is possible. When they don't, it doesn't mean anything.

Computer models are the same way. If they don't work, the conclusion is meaningless. Even if they do work, it is little more than a proof that, when the assumptions are realistic, it could happen. If only those Mythbusters showed more awareness of that, my TV would have a lot fewer dents in it.

Fraud

Some of the problems with models can be attributed to incompetence or missionary zeal. More disturbing is when fraudulent results are presented as science. Fraud is particularly tempting when the science is controversial.

Nature may have pulled a trick on the global warming movement, with that 17-year lack of warming, but this time the warmers tricked themselves. The warmers, caught up in missionary zeal, tried to “hide the decline,” suppressed alternative theories, and even in some cases falsified their results. This did more than make a few climatologists look incompetent. It threatened the credibility, and the continued existence, of an entire branch of science.

It doesn't matter how much precision you put into a model, or how many teraflops you throw at it. A model can never give you a scientific result. A model is never more than a theory—not much more than a sophisticated hunch—until the data confirm it. To base policy decisions on any computer model would be sheer lunacy.

We used models a lot in neuroscience when we were desperate for clues about how the brain works. At first the models looked promising. But eventually people realized that there could be no unique solution. The same effect could be produced by a vast multitude of assumptions. This is just as bad as not producing the effect at all.
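A toy illustration of the non-uniqueness problem, assuming a hypothetical model whose output depends only on the product of two parameters. The names `gain` and `sensitivity` and all of the numbers are invented for illustration and do not come from any real study.

```python
def model(gain, sensitivity, stimulus):
    """Hypothetical two-parameter model: the response scales with gain * sensitivity."""
    return gain * sensitivity * stimulus


# Made-up "observations" for illustration only: response = 6 * stimulus.
stimuli = [0.0, 1.0, 2.0, 3.0]
observed = [0.0, 6.0, 12.0, 18.0]

# Four quite different sets of assumptions about the underlying parameters.
candidates = [(1.0, 6.0), (2.0, 3.0), (3.0, 2.0), (6.0, 1.0)]


def fits(gain, sensitivity, tol=1e-9):
    """True if the model reproduces every observation to within tol."""
    return all(
        abs(model(gain, sensitivity, s) - o) < tol
        for s, o in zip(stimuli, observed)
    )


matching = [c for c in candidates if fits(*c)]
print(len(matching), "of", len(candidates), "parameter sets reproduce the data")
```

Every candidate reproduces the data exactly, so the fit cannot say which set of assumptions, if any, describes the real mechanism.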

Toward the end, the neural network models started to become more and more extravagant in their claims. Just as happened later in climatology, researchers began to compete to produce bigger and bigger effects. Some researchers made claims that exceeded the bounds of credibility. Models that claimed modest, reasonable results became unpublishable. The bad drove out the good. Within a few years, most of the top researchers pulled out and the entire field was discredited.

Models vs. calculations

Models are not to be confused with calculations. A calculation is a model when the real phenomenon cannot be observed, or when the real mechanism is unknown. An example in astrophysics would be a hydrodynamic model of a black hole. This model isn't a wild guess, but it can't be taken as anything more than a theoretical prediction that may or may not be accurate. The theory can be overturned in an instant.

If data confirm the prediction, models may eventually become theories. But if climate models predict warming, or cooling, or whatever, and, by some miracle, it actually happens, it simply means it could have happened the way the model predicted. If it doesn't happen, it doesn't mean the theory is necessarily wrong (although there's a good chance of that). Most of the time, as when the Mythbusters are having fun blowing stuff up, it doesn't mean anything at all.